How to Prepare Your Company's Data for AI Automation (Without Creating a Security Nightmare)

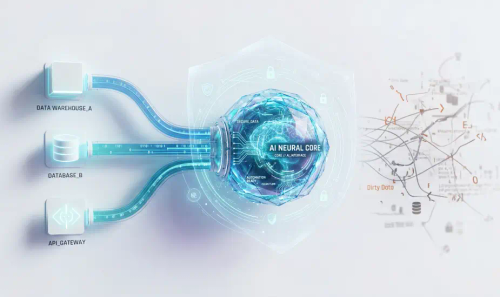

Before your company can use AI, your data has to be clean and secure. Here is how we help businesses organise their messy databases and API silos so they can safely deploy AI agents without accidentally exposing confidential data.

Every few weeks, a client comes to us wanting to “add AI” to their business. They have heard the pitch. They have seen the demos. They are ready to plug a model into their systems and watch the magic happen.

Then we look at their data, and the conversation changes fast.

Most businesses are nowhere near ready for AI automation, and the ones that rush ahead without sorting their data out first tend to create problems that are worse than the ones they were trying to solve. We are not talking about theoretical risks here. We are talking about employees accidentally pasting proprietary source code into ChatGPT (Samsung, 2023), AI research teams leaving 38 terabytes of internal data publicly accessible for three years (Microsoft, 2023), and entire chat histories with API keys leaking from unsecured databases (DeepSeek, 2025).

This post is about what actually needs to happen before AI touches your data, and how we approach that work at WebArt Design.

The gap between AI ambition and data reality

Most companies overestimate how ready their data is. A Gartner survey from late 2024 found that 63% of organisations either lack or are unsure whether they have the right data management practices for AI. IBM’s research puts it even more starkly: only 29% of technology leaders believe their enterprise data meets the quality, accessibility, and security standards needed to scale generative AI.

Gartner predicts that through 2026, organisations will abandon 60% of AI projects that are not supported by AI-ready data. That is not a model problem or a compute problem. That is a data problem.

And the data problem is usually structural. The average enterprise runs on nearly 900 applications, with only about 28% of them integrated with each other, according to MuleSoft’s 2025 Connectivity Benchmark. That means the remaining 72% are silos, each holding its own version of customer records, transaction histories, and operational data. When an AI agent queries across those silos, at best it gets inconsistent answers, and at worst it leaks confidential data across trust boundaries.

We see this pattern constantly. A client has their CRM in one system, their invoicing in another, their customer support tickets in a third, and their HR records somewhere else entirely. None of these systems agree on basic things like customer IDs, date formats, or address structures. Before any AI can do useful work across those systems, someone has to reconcile all of that. And that reconciliation process, if handled carelessly, is exactly where security incidents happen.

How AI projects accidentally expose confidential data

The security risks fall into three categories, and we have seen versions of all three in client environments.

Start with employee behaviour. Cyberhaven’s 2026 AI Adoption and Risk Report, which analysed millions of enterprise interactions, found that nearly 40% of AI interactions involve sensitive data. Employees feed confidential information into AI tools roughly once every three days on average. A separate study found that 38% of employees admitted sharing sensitive work information with AI tools without telling their employer. The Samsung incident is the most public example, where engineers pasted semiconductor source code and internal meeting notes into ChatGPT within 20 days of the company allowing its use, but smaller versions of this happen constantly at companies that have not set clear boundaries.

Then there is infrastructure misconfiguration. When Microsoft’s AI research team accidentally exposed 38 terabytes of data in 2023, including passwords, private keys, and over 30,000 internal Teams messages, it was because of a misconfigured Azure storage token. That token had been publicly accessible since July 2020. Nobody noticed for three years. The DeepSeek incident in January 2025 was similar: Wiz researchers found a completely unauthenticated database containing over a million log entries with plaintext chat histories and API keys.

The risk that is growing fastest, though, is shadow AI. IBM’s 2025 Cost of a Data Breach report found that 20% of all breaches involved unauthorised AI tools used by employees. These shadow AI breaches cost an average of $670,000 more than standard breaches, were more likely to compromise customer data, and took longer to detect. Only 34% of the breached organisations had been performing regular audits for unsanctioned AI usage.

The common thread is that none of these incidents were caused by sophisticated attacks. They were caused by organisations that did not prepare their data environment before deploying or allowing AI tools.

What “AI-ready data” actually requires

When we prepare a client’s data environment for AI, we work through a specific sequence: audit, classify, clean, then normalise. The order matters, because normalising data that has not yet been audited, classified, and cleaned compounds errors downstream.

It starts with a full data audit. We catalogue every system that holds data the AI will need to access, every field that contains potentially sensitive information (PII, financial records, health data, employee records), and every integration point where data moves between systems. This is tedious work, but skipping it is how companies end up with AI agents that can query HR salary data when they were only supposed to access support tickets.

From there, we move into data classification. Every dataset, document, and API endpoint gets tagged with sensitivity levels. Automated discovery tools scan for PII, payment card data, and protected health information across structured databases and unstructured repositories like file shares and email archives. That classification scheme becomes the foundation for every access control decision that follows.
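As a rough illustration of what automated discovery does, the sketch below tags each field of a record with a sensitivity level using two regular expressions. The level names, the `DETECTORS` list, and the `classify_record` helper are assumptions made for this example; real discovery tools use far more robust detection (checksum validation, context, dictionaries) than simple patterns.

```python
import re

# Hypothetical sensitivity scheme, ordered from lowest to highest.
LEVELS = ["internal", "confidential", "restricted"]

# Illustrative detectors only; these patterns will produce false positives.
DETECTORS = [
    ("email", re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+"), "confidential"),
    ("payment_card", re.compile(r"\b(?:\d[ -]?){12,18}\d\b"), "restricted"),
]


def classify_value(value: str) -> str:
    """Return the highest sensitivity level triggered by any detector."""
    level = "internal"
    for _name, pattern, detected_level in DETECTORS:
        if pattern.search(value) and LEVELS.index(detected_level) > LEVELS.index(level):
            level = detected_level
    return level


def classify_record(record: dict) -> dict:
    """Tag every field in a record with a sensitivity level."""
    return {name: classify_value(str(value)) for name, value in record.items()}


sample = {
    "note": "call back tomorrow",
    "contact": "jane@example.com",
    "payment": "4111 1111 1111 1111",
}
tags = classify_record(sample)
```

The resulting tags are what an access control layer would later consult, which is why classification has to come before any permission decisions.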

Then comes the actual cleaning. We measure quality across six dimensions: accuracy, completeness, consistency, timeliness, validity, and uniqueness. Each gets a specific score. Accuracy, for example, is simply (accurate records divided by total records) multiplied by 100. It is not glamorous, but it is the difference between an AI system that produces reliable outputs and one that confidently gives wrong answers.
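The scoring formula is simple enough to show directly. The sketch below applies the same percentage calculation to two of the six dimensions. The definitions used for completeness (all required fields present and non-empty) and uniqueness (distinct keys over total records) are assumptions for illustration, as are the function names.

```python
def percentage_score(passing: int, total: int) -> float:
    """Score = (passing records / total records) * 100."""
    if total == 0:
        return 0.0
    return 100 * passing / total


def completeness(records: list, required_fields: list) -> float:
    """Share of records where every required field is present and non-empty."""
    complete = sum(
        1
        for record in records
        if all(record.get(name) not in (None, "") for name in required_fields)
    )
    return percentage_score(complete, len(records))


def uniqueness(records: list, key: str) -> float:
    """Share of distinct key values relative to the total record count."""
    distinct = len({record.get(key) for record in records})
    return percentage_score(distinct, len(records))


records = [
    {"id": 1, "email": "a@example.com"},
    {"id": 2, "email": ""},               # incomplete: empty email
    {"id": 2, "email": "b@example.com"},  # duplicate id
    {"id": 3, "email": "c@example.com"},
]
completeness_score = completeness(records, ["id", "email"])
uniqueness_score = uniqueness(records, "id")
```

Accuracy uses the same `percentage_score` call, but it needs a trusted reference to compare against, which is why it is not computed from the records alone here.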

The last stage is normalisation and deduplication. Customer records need consistent ID schemes. Date formats need to agree. Address structures need to match. Duplicates need to be merged or flagged. This is where the bulk of the engineering time goes, and it is where we find the most surprising data quality issues. One client had the same customer appearing four times with slightly different spellings of their name, each tied to different transaction histories.
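A minimal sketch of two of these steps, using only the Python standard library: converting dates to ISO 8601 and flagging names that are probably the same customer. The list of date formats, the day-first assumption, and the 0.85 similarity threshold are all illustrative assumptions, not values from a real project. Flagged pairs would normally go to a human for review rather than being merged automatically.

```python
from datetime import datetime
from difflib import SequenceMatcher

# Assumed formats found in the source systems; day-first is an assumption.
DATE_FORMATS = ["%Y-%m-%d", "%d/%m/%Y", "%d.%m.%Y"]


def normalise_date(raw: str) -> str:
    """Convert a date string in any known format to ISO 8601 (YYYY-MM-DD)."""
    for fmt in DATE_FORMATS:
        try:
            return datetime.strptime(raw.strip(), fmt).date().isoformat()
        except ValueError:
            continue
    raise ValueError(f"Unrecognised date format: {raw!r}")


def normalise_name(raw: str) -> str:
    """Lowercase the name and collapse repeated whitespace."""
    return " ".join(raw.casefold().split())


def likely_duplicates(names: list, threshold: float = 0.85) -> list:
    """Return pairs of names whose normalised forms are highly similar."""
    normalised = [normalise_name(name) for name in names]
    pairs = []
    for i in range(len(normalised)):
        for j in range(i + 1, len(normalised)):
            ratio = SequenceMatcher(None, normalised[i], normalised[j]).ratio()
            if ratio >= threshold:
                pairs.append((names[i], names[j]))
    return pairs


flagged = likely_duplicates(["Jon Smith", "John  Smith", "Alice Wong"])
```

The pairwise comparison is quadratic in the number of names, so at real scale it would need blocking (for example, comparing only records that share a postcode) before fuzzy matching.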

Why middleware is the security boundary, not optional infrastructure

Here is where our approach diverges from what we see a lot of businesses attempt on their own. Many companies try to connect AI tools directly to their databases. They give an AI agent a database connection string and let it query whatever it needs.

This is the single most dangerous architectural decision you can make.

An API middleware layer sits between your AI systems and your data. It is not just a convenience. It is your security perimeter. That layer enforces authentication and authorisation, so the AI agent can only access what it has been explicitly permitted to access. It masks and transforms sensitive fields before the AI ever sees them, redacting or anonymising data at the boundary rather than trusting the model to handle it appropriately. Every data access attempt, whether successful or denied, gets logged with identity, timestamp, and the specific data requested. And when queries involve particularly sensitive data, the middleware can route them to internal models instead of external LLMs, keeping that data off third-party infrastructure entirely.

We have built this kind of middleware for clients operating in regulated industries where getting access control wrong is not just a security issue but a compliance violation. The pattern works the same regardless of scale: the AI agent proposes an action, the middleware checks policy, and only then does the action execute.
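To make the propose, check, execute pattern concrete, here is a minimal Python sketch. The `POLICY` table, the `Middleware` class, the agent name, and the in-memory `datastore` are all hypothetical stand-ins; a real deployment would sit in front of actual databases and an identity provider. Routing sensitive queries to internal models is not shown.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone

# Hypothetical policy: resources each agent may read, and fields to mask.
POLICY = {
    "support-agent": {
        "allowed": {"support_tickets"},
        "masked_fields": {"email", "phone"},
    },
}


@dataclass
class Middleware:
    policy: dict
    audit_log: list = field(default_factory=list)

    def fetch(self, agent_id: str, resource: str, datastore: dict) -> list:
        # Unknown agents get an empty rule set, so they are denied by default.
        rules = self.policy.get(agent_id, {"allowed": set(), "masked_fields": set()})
        allowed = resource in rules["allowed"]
        # Log every attempt, allowed or denied, with identity, time, and target.
        self.audit_log.append({
            "agent": agent_id,
            "resource": resource,
            "allowed": allowed,
            "at": datetime.now(timezone.utc).isoformat(),
        })
        if not allowed:
            raise PermissionError(f"{agent_id} may not read {resource}")
        # Mask sensitive fields at the boundary so the model never sees raw values.
        return [
            {k: ("***" if k in rules["masked_fields"] else v) for k, v in row.items()}
            for row in datastore[resource]
        ]


datastore = {
    "support_tickets": [{"id": 1, "email": "jane@example.com", "issue": "login fails"}],
    "hr_salaries": [{"employee": "E-17", "salary": 50000}],
}
middleware = Middleware(POLICY)
tickets = middleware.fetch("support-agent", "support_tickets", datastore)
```

The design choice worth noting is deny-by-default: an agent that is missing from the policy, or a resource that is missing from its allow list, results in a logged refusal rather than a silent pass-through.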

Forrester’s 2024 research on AI orchestration middleware found that this approach delivered 47% faster processing and 42% cost reduction compared to direct integration patterns. Those numbers make sense to us. When you centralise your data access layer, you eliminate the duplicated authentication logic, inconsistent data transformations, and scattered logging that plague direct-to-database approaches.

Access control for AI agents is different from access control for humans

Traditional role-based access control (RBAC) was designed for human users who log in, do their work, and log out. AI agents behave differently. They can make thousands of requests per minute, they can chain actions together in ways that individually look harmless but collectively expose sensitive patterns, and they never get tired or suspicious.

OWASP’s Top 10 for LLM Applications (2025 edition) flags “excessive agency” as a top risk, where LLMs are granted too many permissions, access to unnecessary tools, or the ability to take consequential actions without human approval. This is especially relevant now that agentic AI systems are becoming common, where a single prompt can trigger a chain of tool calls, API requests, and data retrievals.

The emerging best practice is what Microsoft’s March 2026 Zero Trust for AI framework calls “segmented AI pipelines.” Rather than giving an AI agent broad access, you break the pipeline into isolated stages: prompt ingress, retrieval, model inference, tool execution, and response egress. Each stage has its own access controls, its own encryption (TLS 1.3 in transit, AES-256 at rest), and its own identity verification. The AI agent authenticates with short-lived tokens, starts every session in read-only mode, and has every tool invocation routed through an external authorisation service.
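The token and read-only ideas can be sketched in a few lines. This is an illustration under stated assumptions, not an excerpt from any framework: `AgentToken`, `issue_token`, and `authorise` are hypothetical names, the five-minute lifetime is arbitrary, and the `authorise` function stands in for what would be a separate authorisation service in practice.

```python
import secrets
import time
from dataclasses import dataclass


@dataclass(frozen=True)
class AgentToken:
    value: str
    scopes: frozenset
    expires_at: float


def issue_token(ttl_seconds: float = 300, scopes=("read",), now=None) -> AgentToken:
    """Issue a short-lived token; the default scope is read-only."""
    issued_at = time.time() if now is None else now
    return AgentToken(
        value=secrets.token_urlsafe(32),
        scopes=frozenset(scopes),
        expires_at=issued_at + ttl_seconds,
    )


def authorise(token: AgentToken, required_scope: str, now=None) -> bool:
    """Stand-in for an external authorisation check: expiry first, then scope."""
    current = time.time() if now is None else now
    if current >= token.expires_at:
        return False
    return required_scope in token.scopes


# The `now` argument is injected here only to make the example deterministic.
token = issue_token(ttl_seconds=300, now=1000.0)
can_read = authorise(token, "read", now=1100.0)
can_write = authorise(token, "write", now=1100.0)
still_valid_later = authorise(token, "read", now=1300.0)
```

Because the token expires on its own, a leaked credential has a bounded useful life, and any write capability has to be requested explicitly rather than inherited.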

At WebArt Design, we implement a version of this for every AI deployment. The model can propose actions, but policy decides whether they execute. That separation is what prevents a well-intentioned AI query from accidentally surfacing salary data, medical records, or financial details that the requesting user should never see.

Getting predictive analytics right requires more than clean data

Several of our clients come to us wanting predictive analytics specifically, things like churn prediction, demand forecasting, or lead scoring. The data readiness requirements here are more specific than for general AI automation.

Volume thresholds matter more than people expect. A peer-reviewed 2024 study published in Nature’s npj Digital Medicine found that datasets below 300 records systematically overestimate predictive power. Performance tends to stabilise at around 750 to 1,500 records, depending on the model type. If a client comes to us with a database of 200 customer records and wants churn prediction, we have an honest conversation about whether the data can support what they are asking for.

Labelling quality sets the ceiling on model accuracy. For supervised learning, which powers most enterprise predictive analytics, the labelled data is the ground truth. Even small inconsistencies in how target variables are coded (like “yes” versus “Yes” versus “1” in a churn field) can compromise training. We implement validation checks on labelled datasets before they go anywhere near a model.
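A validation check of this kind can be quite small. The sketch below maps the common spellings of a binary label onto 0 and 1 and sets aside anything it does not recognise. The accepted spellings, the `churned` field name, and the function names are assumptions for illustration.

```python
# Assumed spellings for a binary target; extend per dataset.
TRUE_VALUES = {"yes", "y", "true", "1"}
FALSE_VALUES = {"no", "n", "false", "0"}


def normalise_label(raw) -> int:
    """Map a raw label to 1 or 0, or raise if it is not recognised."""
    text = str(raw).strip().casefold()
    if text in TRUE_VALUES:
        return 1
    if text in FALSE_VALUES:
        return 0
    raise ValueError(f"Unrecognised label: {raw!r}")


def validate_labels(rows: list, label_field: str = "churned"):
    """Split rows into cleaned rows and rows rejected for manual review."""
    clean, rejected = [], []
    for row in rows:
        try:
            clean.append({**row, label_field: normalise_label(row.get(label_field))})
        except ValueError:
            rejected.append(row)
    return clean, rejected


rows = [
    {"id": 1, "churned": "yes"},
    {"id": 2, "churned": "Yes"},
    {"id": 3, "churned": 1},
    {"id": 4, "churned": "maybe"},
    {"id": 5, "churned": "No "},
]
clean_rows, rejected_rows = validate_labels(rows)
```

Rejecting unrecognised values, rather than guessing, keeps ambiguous labels out of the training set until someone has looked at them.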

Pipeline reliability is the ongoing operational requirement that most organisations underinvest in. A churn prediction model might show 90% accuracy in staging but fail in production because a feature like “days since last login” was calculated differently during training than it is in the live system. We build feature stores that enforce point-in-time consistency, so the model in production sees data in the same format and context as it did during training.
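One way to read point-in-time consistency is that training and serving share a single feature definition that takes an explicit "as of" timestamp and ignores anything that happened later. The sketch below shows that idea for the login feature mentioned above. It is a simplified illustration with a hypothetical function name, not a feature store.

```python
from datetime import datetime


def days_since_last_login(login_times: list, as_of: datetime):
    """Compute the feature using only logins at or before `as_of`.

    Returns None when the user has no login visible at that point in time.
    """
    visible = [moment for moment in login_times if moment <= as_of]
    if not visible:
        return None
    return (as_of - max(visible)).days


logins = [datetime(2024, 1, 1), datetime(2024, 1, 10), datetime(2024, 2, 1)]

# Training: rebuild the feature as it would have looked on 15 January.
training_value = days_since_last_login(logins, as_of=datetime(2024, 1, 15))

# Serving: the same function, evaluated at the time of the request.
serving_value = days_since_last_login(logins, as_of=datetime(2024, 2, 3))
```

Using one function for both paths removes the most common source of training and serving skew, and filtering on `as_of` prevents future events from leaking into historical training rows.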

And then there is drift. Over time, the patterns in your data shift. Customer behaviour changes, market conditions move, product offerings evolve. If nobody is monitoring for these shifts, your model degrades silently. We track four types of drift: data drift in input features, concept drift in the relationship between inputs and outputs, prediction drift in output distributions, and label drift in the distribution of the target variable. When the Population Stability Index on any monitored feature crosses 0.25, we trigger a retraining cycle.
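For readers unfamiliar with the Population Stability Index, it compares the binned distribution of a feature at training time (expected) with its distribution in production (actual): for each bin, take (actual share minus expected share) multiplied by the natural log of (actual share divided by expected share), then sum across bins. The sketch below is a minimal standard-library version. The equal-width binning, the bin count of 10, and the small `epsilon` used to avoid dividing by zero are assumptions, and the function names are hypothetical.

```python
import math

RETRAIN_THRESHOLD = 0.25  # threshold named in the text above


def population_stability_index(expected, actual, bins=10, epsilon=1e-6):
    """PSI between a baseline sample and a production sample of one feature."""
    low, high = min(expected), max(expected)
    width = (high - low) / bins or 1.0  # fall back if the baseline is constant

    def proportions(values):
        counts = [0] * bins
        for value in values:
            index = int((value - low) / width)
            # Values outside the baseline range go into the first or last bin.
            counts[min(max(index, 0), bins - 1)] += 1
        # Floor empty bins at epsilon so the logarithm stays defined.
        return [max(count / len(values), epsilon) for count in counts]

    expected_share = proportions(expected)
    actual_share = proportions(actual)
    return sum(
        (a - e) * math.log(a / e) for e, a in zip(expected_share, actual_share)
    )


def needs_retraining(expected, actual) -> bool:
    return population_stability_index(expected, actual) > RETRAIN_THRESHOLD


baseline = list(range(100))
shifted = [value + 50 for value in baseline]
stable_psi = population_stability_index(baseline, baseline)
drifted = needs_retraining(baseline, shifted)
```

An identical distribution scores zero, and the score grows as production data moves away from the baseline, which is what makes a fixed threshold usable as a retraining trigger.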

The practical path forward

The organisations that are succeeding with AI right now are not the ones with the fanciest models. They are the ones that treated data readiness and data security as a single initiative from the start.

If your company is considering AI automation, here is the sequence we recommend. First, conduct a thorough data audit and classification exercise before any AI deployment. Know what data you have, where it lives, who can access it, and what sensitivity level it carries. Second, implement a middleware architecture that enforces least-privilege access and zero-trust boundaries between AI systems and your enterprise data. Do not let AI agents query databases directly. Third, establish continuous monitoring for both data quality drift and unauthorised AI usage. Shadow AI is real, and it is expensive.

The companies that skip the unglamorous infrastructure work and plug AI into raw, fragmented, unclassified data are not just risking project failure. They are building the next generation of data breaches.

If you are thinking about AI automation and want to understand what your data actually needs before you start, talk to us at WebArt Design. We will give you an honest assessment of where you stand and what it will take to get there safely.